Technologies of Control and Our Right of Refusal

By Seeta Peña Gangadharan

Refusal is an important part of how we challenge pervasive data collection and data-driven systems. In the transcript below (remarks prepared for a TEDxLondon talk), Seeta Peña Gangadharan introduces Jill, Bebop, Mika, and Sam, all of whom have shared their stories of control and refusal with Our Data Bodies.

Bring to mind those first times you interacted with digital technology. Was it a video game, a dial-up modem, an email? Where were you? And, most importantly, what did it feel like? For me, my strongest memory is of connecting to the internet for the first time in 1992 using something called a telnetting application. I was sitting in the computer room of my college dorm, and I remember being excited, feeling empowered and ready to explore the digital world.

I felt so strongly about the power of the internet that I spent many years advocating for what’s known as digital inclusion, or universal access to the internet. The internet, I believed, was like air or water. Everyone deserved the right of access, so they could communicate and inform themselves.

And I still believe that.

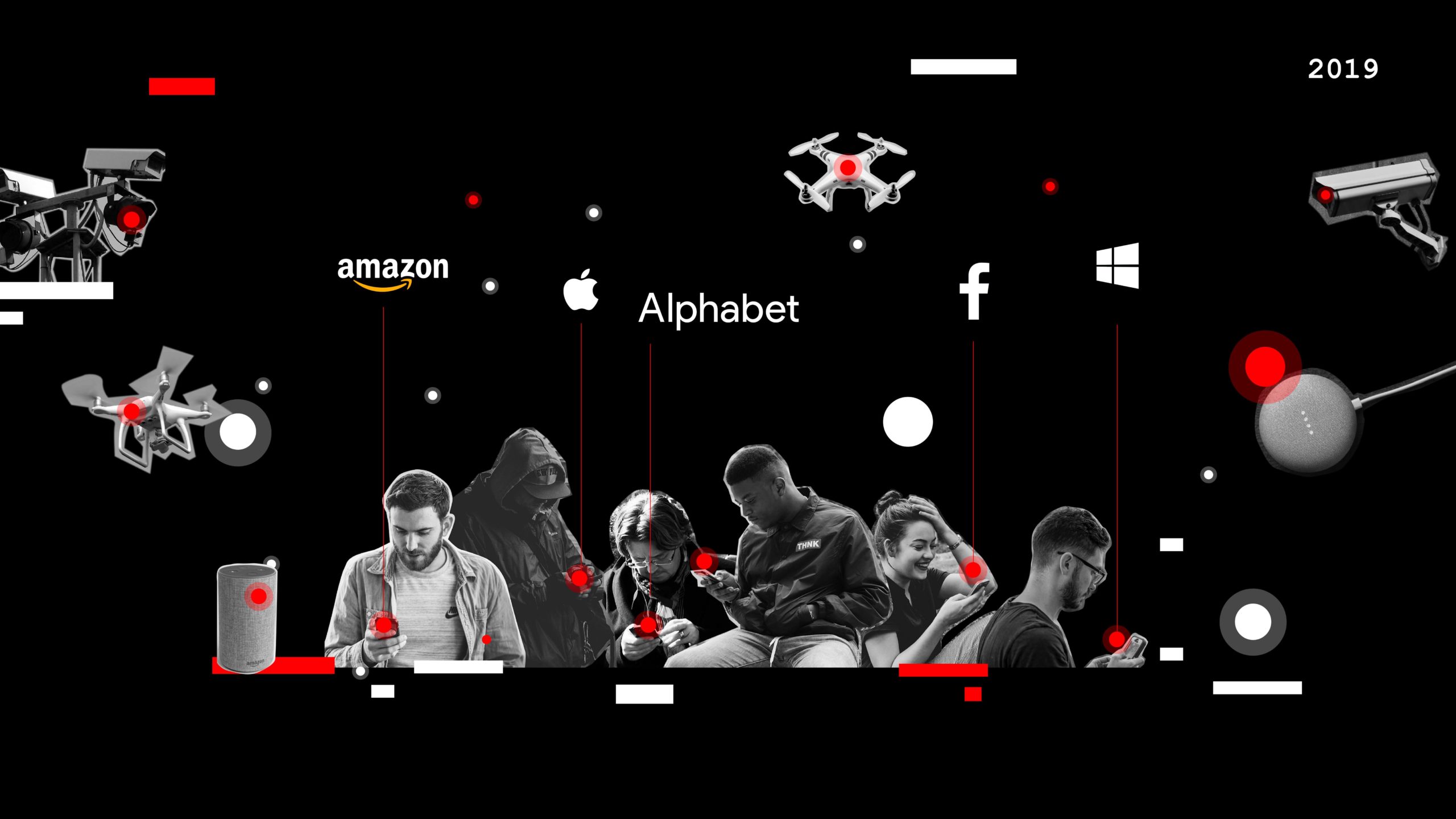

But the internet of the early 1990s is not the internet of the 2000s or of 2019. For one, it has become a multibillion-dollar industry that reflects a grossly unequal society. Microsoft, Amazon, Facebook, Apple, and Alphabet earned more than $800 billion in revenue in the last year alone.

Second, we generate more data than ever before: 2.5 quintillion bytes every day. Advancements in data processing power make it possible for computers to discover patterns in our data and predict what we want to do, buy, or see.

Third, we no longer need to go “online” or “on” the internet. Data-driven, internet-enabled technologies can be anywhere and can connect us whether we know it or not.

The upshot of all this is that it puts us at risk of new levels of social control. Control by machines, and by the people or institutions behind them, can make it difficult for us to exercise our free will, make independent choices, or have power over decisions in our lives.

This realization came to me in 2011. I was working in Washington, DC, at a think tank, promoting universal broadband adoption and starting to wonder about digital privacy. The problem of technologies of control hit home for me one day while conducting research at an organization that teaches computer and internet skills to low-income adults. An instructor at the organization told me about a student who had not been online before and was learning to use a search engine. As a fun exercise, the instructor guided the student to type her name into the search bar. What came back as the first result was an arrest record from years before. To say the least, it wasn’t what she or the instructor had expected. In fact, this person’s first encounter with the internet, her first internet memory, was a representation of herself that wasn’t her own. She didn’t get to define herself. A search engine did, as well as all of the internet users who previously searched for names like hers under the assumption of criminality. She had a digital reputation that literally preceded her, and marked her as a criminal. But arrests are not convictions, and we know from studies of racial profiling that law enforcement disproportionately targets people of color in the United States, especially African Americans.

This woman’s story was one small but profound piece of evidence as to how technologies of control function. Digital technologies amplify people’s biases. They can easily extend entrenched social problems. And that taints a dominant narrative that our society has held about technologies: that they are neutral tools, that they mean progress, that they benefit humankind.

Today, the problem of technologies as social control is ever more present. In the work of Our Data Bodies, a research collective I jointly lead, we’re interested in the impacts of data collection and data-driven systems on marginalized people. Based in Charlotte, North Carolina; Detroit, Michigan; and Los Angeles, California, Our Data Bodies has interviewed nearly 140 people struggling to get by.

So, in Charlotte, we spoke to people who experienced a rapidly changing city whose benefits were unevenly distributed. These were people searching for gainful employment who felt marked by data, such as a criminal record or a credit score. As Jill, one of our interviewees, said:

“I have a criminal background… I pled guilty to worthless checks. It was 2003… [T]hat’s almost 15 years ago, but it’s still held against me, and it still hinders me. It makes it extremely hard to get… permanent employment. Basically, all of my jobs have been temporary positions or contract positions.”

In Detroit, we spoke to residents who experienced the effects of the 2008 financial crisis, a failing local economy, and crumbling public infrastructure, including shutoffs of utility and water services. These Detroiters felt targeted by predatory lenders and even the state, each seeking to profile and exploit them for being disadvantaged and in debt. As Bebop, a Detroiter, said:

“I know all of my information from experiencing a foreclosure, filing for bankruptcy, utility shutoff—all of that is pretty much documented and is out there for everybody to see when it comes to me being able to live and… provide for myself, my children, and my grandchildren.”

In Los Angeles, we spoke to residents who were predominantly unhoused, in temporary encampments, or living in public housing. Many Angelenos felt welfare and social services monitored their every move in demoralizing and incapacitating ways. Speaking about public housing and data collection in LA, Mika said:

“We have what’s called an annual review where every year even though we’ve applied to be here it’s almost like you have to apply and reassure them that you are poor and doing bad in order to stay here. So it’s not actually made to help you do any better. It’s actually made to keep you right where you are.”

In each of these cities, tech exacerbates a cycle of disadvantage, and the refrain of control is loud and clear. Data collection and data-driven technologies are a set-up. They entrap us. Be they tied to hiring and employment, personal wealth and prosperity, or social welfare, these sociotechnical systems of control set us up for failure.

This problem of control raises the following question: what does it mean and what would it take to be digitally included on our own terms? I think the Charlotteans, Detroiters, and Angelenos we’ve spoken with have an answer. And their primary response is refusal.

People we’ve talked to embody the spirit of refusal. Simply put, they are unwilling to accept data-driven systems on the terms and conditions that government or private actors present to us. They go to staggering lengths to rectify data that miscategorize them. They inject “noise” into data-driven systems to confuse them. They are dropping out of social media to avoid targeting and abuse. They are focusing on restoring human relationships, finding ways to redistribute resources, and building power, not paranoia.

As Sam, a Detroiter, said: “I don’t think I’ve surrendered to the fact that they’re just going to do what they’re going to do.”

These are examples we can and should learn from. But two things currently prevent us from taking these lessons to heart.

First is the prevailing wisdom that “this isn’t my problem” or “it can’t happen to me.” But technologies of control are not the sole domain of the marginalized. Tech companies routinely use dark patterns or deceptive strategies, and we don’t need to go very far for evidence of when a nudge turns to a shove. I’m not just talking about that manipulative free trial you subscribed to, but about something as profound as casting a vote in a national election.

Second is an ingrained belief that we should turn to technology for solutions. What experts usually say is:

“We need more data or fairer algorithms.”

“We need more diverse technologists.”

“We need kinder, more responsible tech companies.”

But these proposals help us forget that we have the power to act as individuals and act together to challenge the seemingly inevitable uses and ends of technology. Because we all deserve the right to feel joy and freedom not only for our first-time experiences, but also for our lifetime experiences with technology. And to do that, it might just require that we reject what’s currently on offer. What counts here is that our refusal is about choice, so we can lead lives we value.

So I ask of you: what form of civil disobedience are you going to practice to deny machines—and the people and institutions behind them—the ability to control us? What can you refuse? And what will it feel like?

I’m willing to venture that exercising our right of refusal will define what it means to be an active digital citizen in the current age.